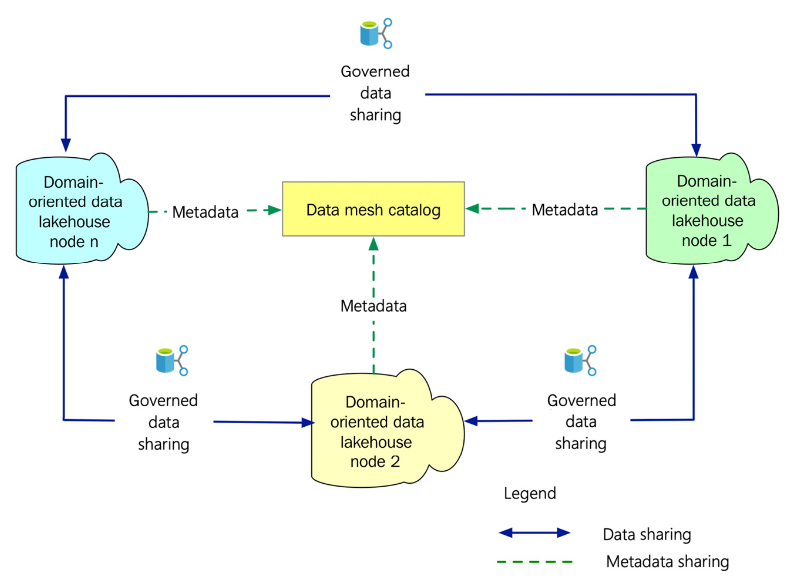

The conceptual architecture of data mesh

To Nha Notes | July 22, 2022, 11:15 a.m.

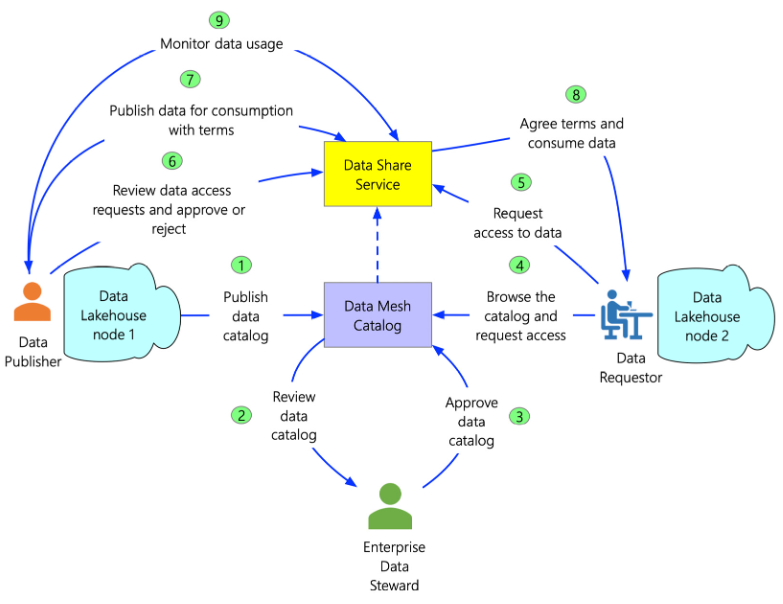

The data sharing workflow in data mesh

The preceding figure illustrates the data sharing workflow between two nodes in a data mesh architecture:

- First, the data publishers, who own the data, publish the metadata of their data lakehouse node to the data catalog.

- The enterprise data mesh steward reviews the published catalog to ensure that it is aligned to the organization's governance framework.

- The steward then approves or rejects the published catalog contents. If approved, the catalog is updated with the metadata.

- When a data requestor from one node requires data from another node, the data requestor browses the data mesh catalog to identify the data of interest.

- Once the data of interest is identified, the data requestor requests the data from the hub through the Data Share service.

- The request for data access is routed to the data publisher, who reviews it and either approves or rejects it.

- If the request is approved, the data publisher shares the data with the data requestor through the Data Share service, which enables data sharing between the nodes. As in the hub-spoke architecture, the terms of data usage are also clarified.

- Finally, the data requestor reviews the terms of data usage. Upon accepting the terms, the data requestor can start consuming the data.

- The data publisher constantly monitors the data usage pattern through the Data Share service.
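
The steps above can be read as a small state machine. The following is a minimal in-memory sketch of that reading, not the API of any real Data Share service: the names `DataMesh`, `ShareRequest`, `ShareState`, and every method and node name are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class ShareState(Enum):
    REQUESTED = auto()  # requestor asked for the data
    APPROVED = auto()   # publisher approved; terms of usage await acceptance
    REJECTED = auto()   # publisher declined the request
    ACTIVE = auto()     # requestor accepted the terms and may consume


@dataclass
class ShareRequest:
    dataset: str
    requestor: str
    state: ShareState = ShareState.REQUESTED


@dataclass
class DataMesh:
    """Hypothetical stand-in for the catalog plus the Data Share service."""
    pending: dict = field(default_factory=dict)    # dataset -> publisher, awaiting steward review
    catalog: dict = field(default_factory=dict)    # dataset -> publisher, steward-approved
    usage_log: list = field(default_factory=list)  # (requestor, dataset) per read

    def publish(self, publisher, dataset):
        # The publisher submits metadata; it is not yet visible in the catalog.
        self.pending[dataset] = publisher

    def steward_review(self, dataset, approve):
        # The steward approves or rejects; only approved metadata reaches the catalog.
        publisher = self.pending.pop(dataset)
        if approve:
            self.catalog[dataset] = publisher

    def request(self, requestor, dataset):
        # The requestor can only ask for data that is listed in the catalog.
        if dataset not in self.catalog:
            raise KeyError(f"{dataset} is not in the catalog")
        return ShareRequest(dataset, requestor)

    def publisher_review(self, req, approve):
        # The request is routed to the publisher, who approves or rejects it.
        if req.state is not ShareState.REQUESTED:
            raise ValueError("request has already been reviewed")
        req.state = ShareState.APPROVED if approve else ShareState.REJECTED

    def accept_terms(self, req):
        # The requestor accepts the terms of data usage.
        if req.state is not ShareState.APPROVED:
            raise ValueError("no approved share to accept")
        req.state = ShareState.ACTIVE

    def consume(self, req):
        # Each read is logged so the publisher can monitor usage patterns.
        if req.state is not ShareState.ACTIVE:
            raise PermissionError("terms not accepted or request not approved")
        self.usage_log.append((req.requestor, req.dataset))
        return f"rows from {req.dataset}"


mesh = DataMesh()
mesh.publish("sales-node", "orders")
mesh.steward_review("orders", approve=True)
req = mesh.request("marketing-node", "orders")
mesh.publisher_review(req, approve=True)
mesh.accept_terms(req)
mesh.consume(req)
print(mesh.usage_log)  # [('marketing-node', 'orders')]
```

Keeping the steward's approval and the publisher's approval as separate gates mirrors the workflow: the first controls what appears in the catalog, the second controls who may read a given dataset.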

Source: the book Data Lakehouse in Action.